Air/shots

Discovering a workflow for app screenshots

Air/shots is an internal initiative from our design tools team. A feature on air/shots was originally published in Fast Company earlier this year.

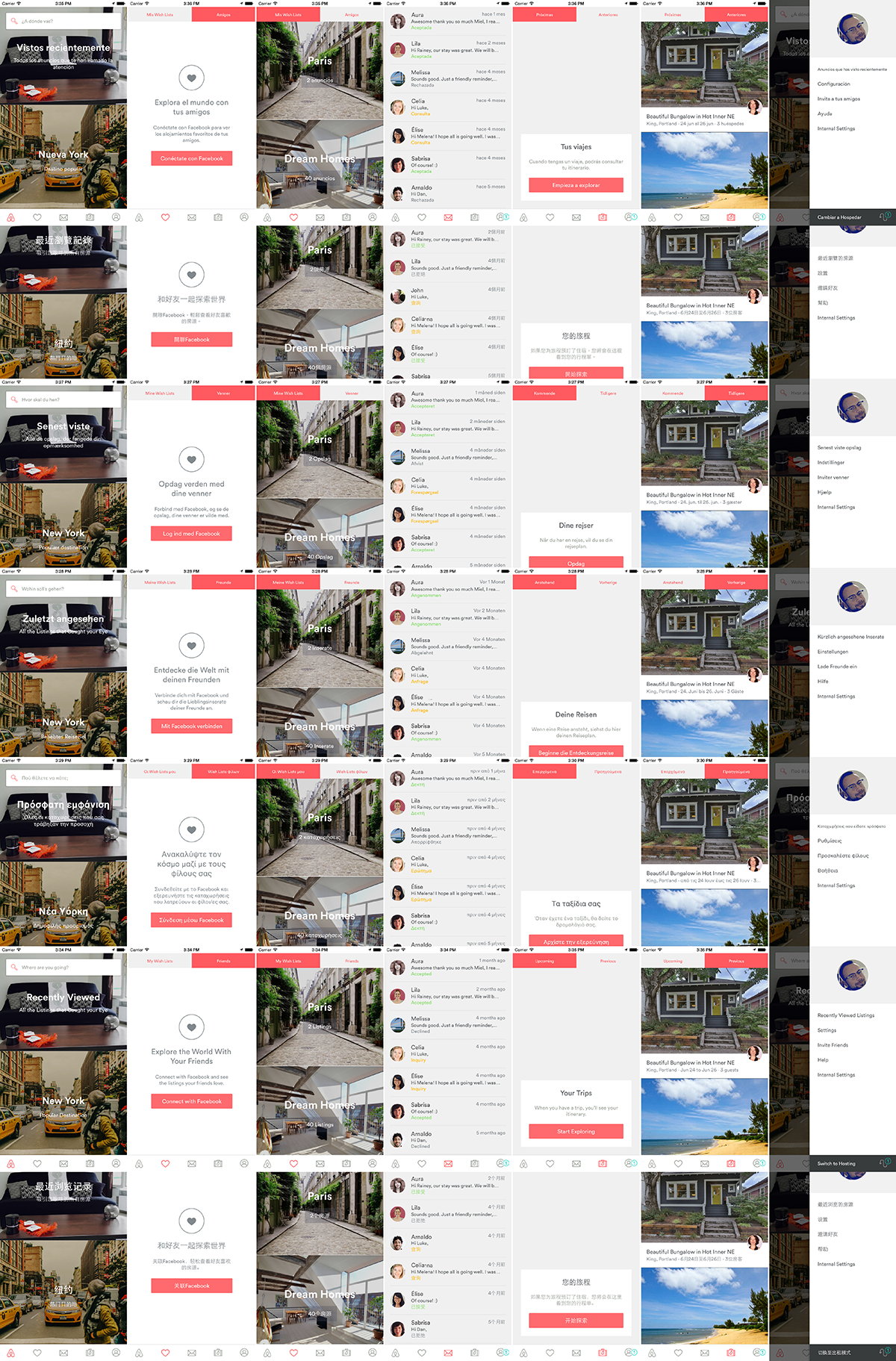

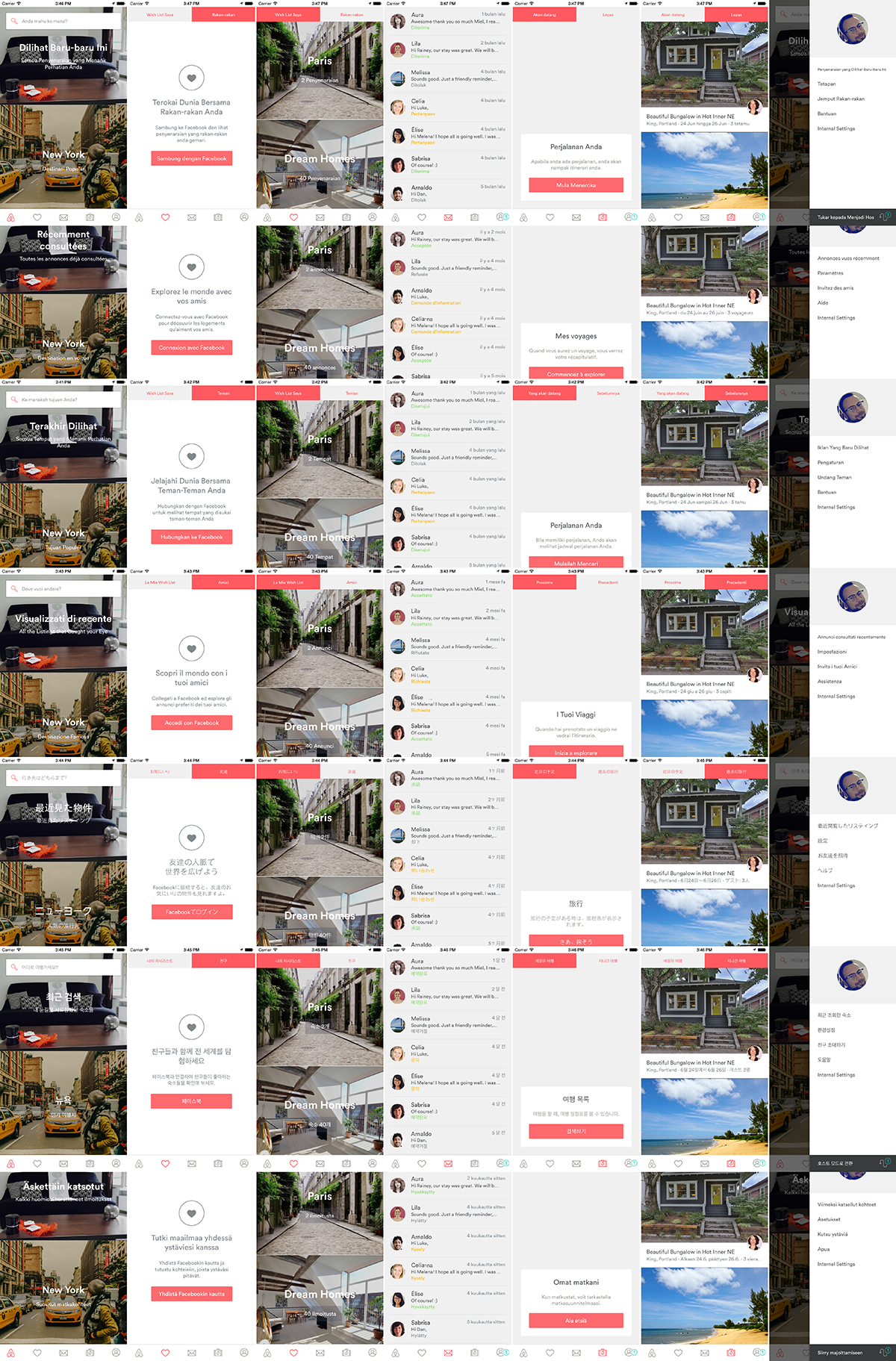

Airshots in action

I’m a Design Technologist. To be honest, I’m not sure exactly what that means. While my official job description is to create tools for the Design team at Airbnb, I also try to keep in mind how the tools I develop can help the entire company.

One day our VP of Design, Alex Schleifer, mentioned in passing that it would be cool if we had some sort of tool where designers could see all of the different views of our app. This was exactly the type of project I felt could affect several departments within Airbnb. Intrigued, I whipped up a quick design in Photoshop to collect my ideas.

First Draft / North Star Design

As I worked on it, two main groups who frequently need to view our app on multiple devices came to mind: customer service agents and designers. With a tool like this, agents could walk hosts and guests through updating their profile pictures step by step, without logging in or tracking down a shared device. Designers could do the same, but for the purposes of testing a design (in multiple languages) or finding an existing design in need of some love. Having an easily accessible library of screenshots would greatly reduce the need to work across dozens of real devices.

Dream features of this new tool included the ability to upvote flows and leave comments. Common host and guest questions would float to the top, reducing the need for agents to search for specific screens. With a comments feature, agents could leave links to relevant help articles they had recommended for each question. Designers could use the same upvoting feature to prioritize designs they felt needed attention. Workflows could be streamlined across both disciplines.

The first draft design was done. The next step was collecting screenshots. I turned to my trusty friend (and the source of all knowledge I’ve used in my professional career): The INTERWEBS.

How I learned most everything I know

I believe that everyone has a technique for “Googling.” I prefer to start off conversationally, eventually building upon a central question. Some examples of my early searches are:

How do I take multiple screenshots?

How would you take a screenshot?

How would you take a screenshot on iOS?

How would you take a screenshot on iOS in batches?

How would you take a screenshot on iOS in batches of my app?

Most of the time this leads nowhere fast. Still, I make sure to click on every link that interests me, usually using a Chrome extension called Linkclump. Afterwards, I use Vimium to cycle through 20 or so open tabs and eliminate erroneous information. After reading these links I returned to Google, awash with rows of purple links I had already visited. Finally, I saw a word I hadn’t seen before: Emulator.

This led me to one final search:

How would you take a screenshot on iOS in batches of my app with an emulator?

Up until this point in my career I had never really worked with Xcode, the command line, or an emulator. However, being self-taught, I knew that with some research I would figure out enough to talk about using these tools. It took a full morning of searching to find my first lead: Snapshot. A particularly clever fellow named Felix Krause had developed an open-source toolchain called Fastlane. He created it to help test and deploy iOS apps. Snapshot is the part of the toolchain that generates localized iOS screenshots for different device types to upload to the App Store.

The part of Snapshot that really caught my eye was the exported result:

Sample output of Snapshot

After creating an Xcode build from our GitHub repository and learning the ropes of the terminal, Snapshot wasn’t too hard to finish setting up. This is the first test flow I ran to show Alex:

The first run on our app using Snapshot

Jackpot! After seeing how easy it was to produce a set of screenshots across several languages and devices, it became clear that this was something worth investigating further. Without Snapshot, producing results along the lines of my initial Photoshop design would require firing up an emulator for each device and changing the language each time, or tracking down individual test devices and taking each screenshot manually.

At this point I was pulled off my work on the Production Design team to focus on this project and investigate the possibilities.

One of Snapshot’s main limitations is that it only works for iOS. My manager and our Director of Design Ops, Adrian Cleave, recommended that I try using the Appium framework instead.

While Appium is used mainly for automated testing of mobile apps, it also comes with the ability to take screenshots. After navigating a kind of tricky setup process, I found driving the app via the Appium Ruby Console to be much easier. The two main actions I needed for my purposes were “tap” and “text entry.” Using these two actions, I wrote Ruby scripts to collect screenshots of our most common flows.
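The original scripts aren’t reproduced here, but the shape of a flow built from those two actions can be sketched roughly as follows. Everything in it is an assumption made for illustration: `FakeDriver` is a hypothetical stand-in that only records what it is asked to do (so the sketch runs without Appium installed), and the method names, coordinates, and file names are made up rather than taken from Appium’s API or the real app.

```ruby
# FakeDriver is a hypothetical stand-in for a real Appium session. It only
# records the actions it receives, so this sketch runs anywhere.
class FakeDriver
  attr_reader :log

  def initialize
    @log = []
  end

  def tap_at(x, y)
    @log << "tap #{x},#{y}"
  end

  def enter_text(text)
    @log << "type #{text}"
  end

  def save_screenshot(path)
    @log << "screenshot #{path}"
  end
end

# A flow is an ordered list of steps built from the two core actions,
# with a screenshot captured wherever a screen is worth keeping.
LOGIN_FLOW = [
  [:tap_at, 160, 420],                  # focus the email field (made-up coordinates)
  [:enter_text, "guest@example.com"],
  [:save_screenshot, "login-01.png"],
  [:tap_at, 160, 520],                  # press the log in button
  [:save_screenshot, "login-02.png"]
].freeze

# Send each step to the driver in order and return what was recorded.
def run_flow(driver, flow)
  flow.each do |action, *args|
    driver.public_send(action, *args)
  end
  driver.log
end

log = run_flow(FakeDriver.new, LOGIN_FLOW)
puts log
```

Keeping the flow as plain data, separate from the code that runs it, is one way to make the same steps replayable on another device or in another language.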

Running the first script was a success. Problems arose when we tried to repeat it.

First of all, the Airbnb apps change constantly. Each time an element is added or removed, the structure of the view changes, and the xpaths that point into it change too. All of them. On top of this, the XY coordinates of a button or element may change depending on the language it’s displayed in. Additionally, when using xpaths, the script has to wait for the entire XML tree to be available, a delay of three to four seconds, which is longer than desired.
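To make the xpath problem concrete, here is a small sketch under stated assumptions. It models a screen as a plain Ruby array rather than a real Appium XML tree, and the element names are invented. It compares a positional lookup (in the spirit of an xpath such as `//Button[1]`) with a lookup by a name that doesn’t depend on where the element sits.

```ruby
# A screen modeled as a flat list of elements. Each hash is a hypothetical
# stand-in for a node in the XML tree of a view.
screen_v1 = [
  { type: "Button", name: "log_in" },
  { type: "Button", name: "sign_up" }
]

# A later release inserts a new button above the existing ones.
screen_v2 = [{ type: "Button", name: "promo_banner" }] + screen_v1

# Positional lookup: "the Nth element of this type", counting from 1.
def by_position(screen, type, index)
  screen.select { |el| el[:type] == type }[index - 1]
end

# Lookup by a name that is independent of the element's position.
def by_name(screen, name)
  screen.find { |el| el[:name] == name }
end

before = by_position(screen_v1, "Button", 1)[:name]
after  = by_position(screen_v2, "Button", 1)[:name]
stable = by_name(screen_v2, "log_in")[:name]
puts "position: #{before} -> #{after}, name: #{stable}"
```

The positional lookup silently lands on a different button after the insertion, while the name-based lookup still finds the original one.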

Since I began working on this project, the app’s content had shifted due to experiments run by our iOS team. To overcome this problem they had built in a switch that allows for experiments to be disabled. After I got the scripts running successfully, Adrian helped me reduce the amount of manual work I was doing with shell script variables.
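The idea of replacing hand-edited values with variables can be sketched like this. The sketch is in Ruby rather than shell, and the device names, language codes, environment variable names, and `flows/` path are all hypothetical. The article doesn’t describe the actual variables used.

```ruby
# Hypothetical values; the real device and language lists are not given.
DEVICES   = ["iPhone 6", "iPad Air"].freeze
LANGUAGES = %w[en fr ja].freeze

# Build one command line per device and language pair instead of
# editing the values by hand before every run.
def build_runs(flow, devices, languages)
  devices.product(languages).map do |device, language|
    %(DEVICE="#{device}" APP_LANGUAGE=#{language} ruby flows/#{flow}.rb)
  end
end

runs = build_runs("login", DEVICES, LANGUAGES)
puts runs
```

Two devices and three languages yield six runs from a single flow definition, and adding a device or language is a one-line change.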

Personally, I don’t mind doing it the “long way”, at least in the beginning. I know that there are ways to loop and improve code that is repeated, but I think of that as a secondary step. I like to have things working the long way first and then figure out how to improve it later, rather than tackling both issues together. I feel that this approach makes it easier to identify problems in the script or code.

After a couple of weeks of creating scripts and outputting them, I started to wonder: How could this be faster? Creating a script wasn’t necessarily hard, but it was a bit scary if you weren’t used to coding. XY coordinates had proved to be the most reliable method across repeated runs, and with them only certain variables changed from one script to the next. Realizing this, I crafted a Photoshop script that would output a Ruby script that would be run by Appium. This opened up script creation widely, to anyone who knew how to use Photoshop.
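The generator itself was a Photoshop script, which isn’t shown here. As a rough sketch of the same idea in Ruby, the function below turns a list of marked points into the text of a script. The step format and the `tap_at`, `enter_text`, and `save_screenshot` helper names in the generated output are placeholders invented for this example, not Appium’s API.

```ruby
# Each step is a point marked on a mockup, plus optional text to type there.
# The format is hypothetical, not the one the Photoshop script used.
steps = [
  { x: 160, y: 420, text: "guest@example.com" },
  { x: 160, y: 520 }
]

# Turn the marked points into the source of a script: a tap per step,
# an optional text entry, then a numbered screenshot.
def generate_script(name, steps)
  lines = steps.each_with_index.flat_map do |step, i|
    out = ["tap_at(#{step[:x]}, #{step[:y]})"]
    out << "enter_text(#{step[:text].inspect})" if step[:text]
    out << "save_screenshot(\"#{name}-#{format('%02d', i + 1)}.png\")"
    out
  end
  lines.join("\n") + "\n"
end

script = generate_script("login", steps)
puts script
```

Because only the coordinates and text vary between flows, the person marking up the design never has to touch the generated code.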

Once it was clear what we required and what parts of our workflow needed improvement, Adrian sourced Appium specialists to help build a scalable and more automatable framework. This fancy new framework includes features like:

- The ability to continue running a set of scripts if one or more has failed

- Using Page Objects to group xpaths and resource IDs in logical groups

- Defining extendable scripts with methods that allow for most of the scripts to be used across Android and iOS

- Using methods to define common actions that can be reused, such as logging in

- Reducing the number of scripts – allowing for code to be reused across platforms
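A few of these ideas can be sketched together in a small, self-contained example. It is not the framework the specialists built. The class, locator values, and flow names are all hypothetical, and the “actions” are just strings appended to an array so the sketch runs without Appium.

```ruby
# Page object: groups the locators for one screen per platform, so a flow
# never mentions a raw xpath or resource ID. All locator values are made up.
class LoginPage
  LOCATORS = {
    ios:     { email: "email_field", submit: "log_in_button" },
    android: { email: "com.example:id/email", submit: "com.example:id/log_in" }
  }.freeze

  def initialize(platform)
    @locators = LOCATORS.fetch(platform)
  end

  def locator(name)
    @locators.fetch(name)
  end
end

# A common action written once and reused by flows on both platforms.
def log_in(page, actions)
  actions << "type into #{page.locator(:email)}"
  actions << "tap #{page.locator(:submit)}"
end

# Run every flow, recording failures instead of stopping at the first one.
def run_all(flows)
  flows.each_with_object({ passed: [], failed: [] }) do |(name, flow), result|
    begin
      flow.call
      result[:passed] << name
    rescue StandardError => e
      result[:failed] << "#{name}: #{e.message}"
    end
  end
end

actions = []
flows = {
  "login_ios"     => -> { log_in(LoginPage.new(:ios), actions) },
  "broken_flow"   => -> { raise "element not found" },
  "login_android" => -> { log_in(LoginPage.new(:android), actions) }
}

result = run_all(flows)
puts result
```

The broken flow in the middle is reported as a failure, and the Android flow after it still runs using the same `log_in` method as iOS.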

To view air/shots, Adrian created a custom front-end web interface and integrated PourOver, a JavaScript library created by The New York Times. It allows thousands of images to be filtered quickly without calling back to a server.
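The general idea behind this kind of in-memory filtering can be sketched as below. This is a Ruby illustration of precomputing an index and intersecting sets, not PourOver’s API or the actual air/shots front end. The screenshot fields and values are hypothetical.

```ruby
require "set"

# Hypothetical screenshot records; the real metadata fields are not described.
SHOTS = [
  { id: 1, platform: "ios",     language: "en", flow: "login" },
  { id: 2, platform: "ios",     language: "fr", flow: "login" },
  { id: 3, platform: "android", language: "fr", flow: "login" },
  { id: 4, platform: "android", language: "fr", flow: "search" }
].freeze

# Build the index once: for each field and value, the set of matching ids.
def build_index(shots, fields)
  index = Hash.new { |hash, key| hash[key] = Set.new }
  shots.each do |shot|
    fields.each { |field| index[[field, shot[field]]] << shot[:id] }
  end
  index
end

# Answer a query by intersecting the precomputed sets, with no rescan
# of the records and no round trip to a server.
def filter(index, criteria)
  criteria.map { |field, value| index.fetch([field, value], Set.new) }
          .reduce(:&)
          .to_a
          .sort
end

INDEX = build_index(SHOTS, %i[platform language flow])
matches = filter(INDEX, platform: "android", language: "fr")
puts matches.inspect
```

Each additional filter only narrows sets that are already in memory, which is what keeps this approach responsive as the number of images grows.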

Every step of this process was an exercise not only in how this tool could benefit the Design team, but also in how it might help the entire company. After the internal release, other departments found ways they could use air/shots. The Engineering department showed interest in running flows on experiments before they went live to help with debugging. Our Localization team distributed air/shots to proofreaders, helping them discover which translations needed improvement across the 27 languages we support. Along with feature suggestions gained through internal feedback, we plan to add wishlist features like upvoting and commenting.

I’m currently updating our iOS app with accessibility identifiers that will allow for scripts to be reused across future updates. As we perfect air/shots, we plan to release it as an open-source workflow – a set of existing frameworks used in combination to achieve our goal. I’m interested to see how the design community uses this workflow and how users’ suggestions will influence the growth of air/shots.

I’m not an engineer by any means, but I do have a knack for connecting existing tools to achieve the results I want. Maybe that is what being a Design Technologist is about. One of my favorite quotes by Arthur Ashe comes to mind: “Start where you are, use what you have, do what you can.”

Special shoutouts to: Alfred App and Google Searching. Without you this project would not have been possible. <3